Scaling distributed training with AWS Trainium and Amazon EKS

AWS Machine Learning

FEBRUARY 1, 2023

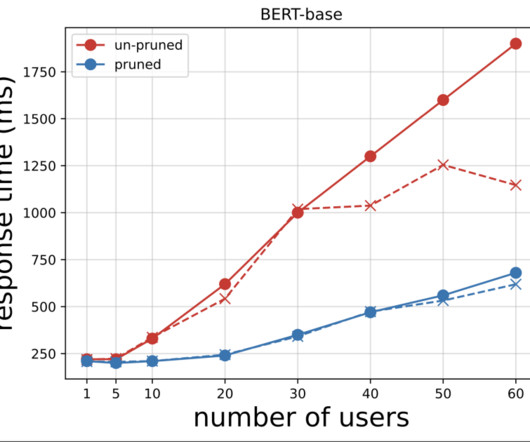

Larger models tend to be more powerful, but training them requires significant computational resources. Even with advanced distributed training libraries such as FSDP and DeepSpeed, training jobs commonly require hundreds of accelerator devices for several weeks or months at a time.
