Accelerate Amazon SageMaker inference with C6i Intel-based Amazon EC2 instances

AWS Machine Learning

MARCH 20, 2023

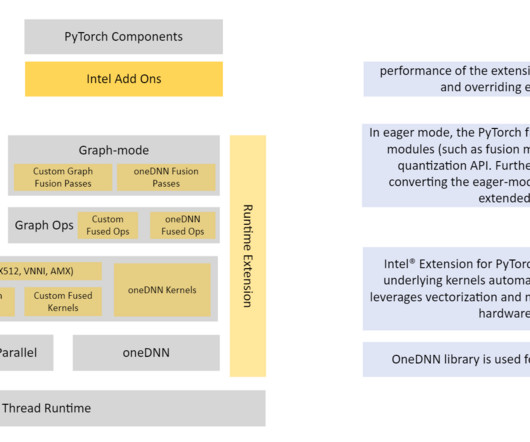

In this post, we show you how to build and deploy INT8 inference with your own processing container for PyTorch. Use the supplied Python scripts for quantization, then run the provided Python test scripts to invoke the SageMaker endpoint for both the INT8 and FP32 versions. Refer to invoke-INT8.py.
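To make the endpoint test concrete, here is a minimal sketch of what invoking the INT8 and FP32 endpoints and timing each call might look like. It is not the post's invoke-INT8.py script. The function names `invoke_endpoint` and `compare_endpoints`, the endpoint names, and the JSON payload shape are hypothetical, and the sketch assumes the custom container accepts and returns `application/json`. The only AWS call used is the SageMaker runtime client's `invoke_endpoint`.

```python
import json
import time


def invoke_endpoint(client, endpoint_name, payload):
    """Send one JSON request to a SageMaker endpoint.

    `client` is expected to behave like boto3.client("sagemaker-runtime").
    Returns the decoded JSON response and the wall-clock latency in seconds.
    """
    start = time.perf_counter()
    response = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",  # assumption: container accepts JSON
        Body=json.dumps(payload),
    )
    latency = time.perf_counter() - start
    # The response body is a stream; read it fully, then decode the JSON.
    result = json.loads(response["Body"].read())
    return result, latency


def compare_endpoints(client, endpoint_names, payload):
    """Invoke each endpoint once with the same payload and collect results."""
    results = {}
    for name in endpoint_names:
        result, latency = invoke_endpoint(client, name, payload)
        results[name] = {"result": result, "latency_s": latency}
    return results
```

With boto3 installed and credentials configured, you could pass `boto3.client("sagemaker-runtime")` as `client` and call `compare_endpoints(client, ["my-int8-endpoint", "my-fp32-endpoint"], {"inputs": "sample text"})`, where both endpoint names and the payload are placeholders for your own deployment. A single call per endpoint is only a smoke test; a fair latency comparison would repeat the request many times and discard warm-up calls.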
