Fast and cost-effective LLaMA 2 fine-tuning with AWS Trainium

AWS Machine Learning

OCTOBER 5, 2023

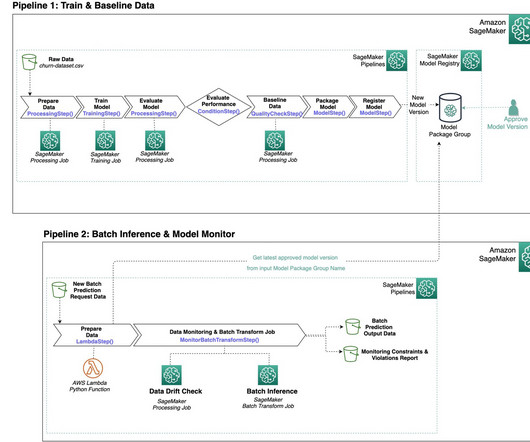

We review the fine-tuning scripts provided by the AWS Neuron SDK (using NeMo Megatron-LM), the various configurations we used, and the throughput results we saw. Compared to Llama 1, Llama 2 doubles the context length from 2,048 to 4,096 tokens and uses grouped-query attention (in the 70B model only).

4096 2 8 4 1 256 7.4

4096 4 8 4 1 256 14.6
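Grouped-query attention (GQA) is easiest to see in code. The following is a minimal NumPy sketch of the general technique, not the Llama 2 or AWS Neuron SDK implementation. The function name, the tensor layout, and the omission of a batch dimension are our own simplifying assumptions. Each group of query heads shares a single key/value head, which shrinks the key/value cache by a factor of `n_heads / n_kv_heads`. For example, Llama 2 70B uses 64 query heads and 8 key/value heads, an 8x reduction.

```python
import numpy as np


def grouped_query_attention(q, k, v):
    """Causal scaled dot-product attention with grouped-query attention.

    Hypothetical helper for illustration only (batch dimension omitted).
    q:    (n_heads, seq_len, head_dim)
    k, v: (n_kv_heads, seq_len, head_dim), n_heads divisible by n_kv_heads.
    """
    n_heads, seq_len, head_dim = q.shape
    n_kv_heads = k.shape[0]
    assert n_heads % n_kv_heads == 0, "query heads must split evenly into KV groups"
    group_size = n_heads // n_kv_heads

    # Repeat each KV head so query head h uses KV head h // group_size.
    k = np.repeat(k, group_size, axis=0)  # (n_heads, seq_len, head_dim)
    v = np.repeat(v, group_size, axis=0)

    # Scaled attention scores: (n_heads, seq_len, seq_len).
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(head_dim)

    # Causal mask: position i may only attend to positions <= i.
    mask = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(mask, -np.inf, scores)

    # Numerically stable softmax over the key dimension.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v  # (n_heads, seq_len, head_dim)


# Usage: 8 query heads sharing 2 KV heads (4 query heads per group).
rng = np.random.default_rng(0)
q = rng.standard_normal((8, 16, 32))
k = rng.standard_normal((2, 16, 32))
v = rng.standard_normal((2, 16, 32))
out = grouped_query_attention(q, k, v)
print(out.shape)  # (8, 16, 32)
```

Setting `n_kv_heads == n_heads` reduces this to standard multi-head attention. Only the stored keys and values shrink; the number of query heads and the output shape stay the same.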
